Initial Considerations

While our sensors were designed for aerial platforms such as multirotor drones and fixed-wing aircraft, some researchers have used MicaSense sensors at much lower altitudes for time-lapse or single-capture analysis.

The camera has a fixed focus set at infinity, so objects 1-2 meters away will be in varying degrees of focus. Because the camera has five independent lenses and imagers, each band is captured from a slightly different location. Images taken closer than 15 meters will generally show parallax errors caused by these small offsets, which may lead to processing artifacts and to a lack of sufficient matching texture to combine the images into an orthomosaic. The same applies if you attempt to align the bands of an individual capture.
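To see why distance matters so much, the pinhole stereo relation d = f * B / Z estimates the residual pixel offset between two imagers separated by a baseline B when the subject is at distance Z (f is the focal length in pixels). The baseline and focal length below are placeholder values chosen for illustration, not MicaSense specifications; substitute your camera's actual geometry.

```python
def parallax_pixels(baseline_m: float, focal_length_px: float, distance_m: float) -> float:
    """Approximate pixel offset (disparity) between two imagers for a point at distance_m.

    Pinhole stereo relation d = f * B / Z: f is the focal length in pixels,
    B the spacing between the imagers, Z the distance to the subject.
    """
    if distance_m <= 0:
        raise ValueError("distance_m must be positive")
    return focal_length_px * baseline_m / distance_m


# Placeholder values for illustration only (NOT MicaSense specifications).
BASELINE_M = 0.02         # assumed 2 cm spacing between two imagers
FOCAL_LENGTH_PX = 1450.0  # assumed focal length expressed in pixels

if __name__ == "__main__":
    for distance in (1.0, 2.0, 15.0, 60.0):
        d = parallax_pixels(BASELINE_M, FOCAL_LENGTH_PX, distance)
        print(f"{distance:5.1f} m -> {d:6.2f} px")
```

With these assumed numbers the offset is about 29 px at 1 m but under 2 px at 15 m, which is consistent with the 15-meter guidance; the exact threshold for your camera depends on its real baseline and optics.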

MicaSense encourages capturing at 15 meters or higher, but in some cases data captured at lower heights can still be of good quality. We recommend experimenting to find the altitude that works best for your use case.

Whether single captures can be aligned and analyzed with the MicaSense image processing library depends on the height of the objects being imaged. For example, you may be able to analyze a capture from 15 meters if the surface is flat, but if the frame is dominated by a 10-meter tree canopy, you will have trouble aligning the bands.
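The canopy example comes down to depth variation: a single warp can be exact for only one plane, so what matters is the spread in parallax between the nearest and farthest surfaces in the frame. The sketch below uses placeholder values (a 2 cm imager baseline and a 1450 px focal length, both assumptions rather than camera specifications) to compare a flat surface 15 m away with a 10 m canopy viewed from 15 m above the ground, where the canopy top is about 5 m from the camera.

```python
def disparity_spread_px(baseline_m: float, focal_length_px: float,
                        nearest_m: float, farthest_m: float) -> float:
    """Difference in parallax between the nearest and farthest surfaces in a frame.

    A single transform can cancel the parallax at one depth only; any depth
    variation leaves roughly this many pixels of residual misalignment.
    """
    if not 0 < nearest_m <= farthest_m:
        raise ValueError("require 0 < nearest_m <= farthest_m")
    return focal_length_px * baseline_m * (1.0 / nearest_m - 1.0 / farthest_m)


# Placeholder geometry for illustration only (NOT MicaSense specifications).
BASELINE_M = 0.02
FOCAL_LENGTH_PX = 1450.0

if __name__ == "__main__":
    flat = disparity_spread_px(BASELINE_M, FOCAL_LENGTH_PX, 15.0, 15.0)
    canopy = disparity_spread_px(BASELINE_M, FOCAL_LENGTH_PX, 5.0, 15.0)
    print(f"flat surface at 15 m: {flat:.2f} px residual")
    print(f"10 m canopy from 15 m: {canopy:.2f} px residual")
```

With these placeholder numbers the flat scene leaves no depth-induced residual, while the canopy leaves roughly 3.9 px of misalignment that no single transform can remove.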

Another consideration is powering and cooling the camera. The sensors are designed to be air-cooled and are prone to overheating if left capturing in a static position without any heat dissipation, especially inside an enclosure with little or no ventilation. If you plan to run the camera continuously, we recommend monitoring its temperature to keep it well within the operating temperature range.
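If you log the camera's temperature during long static captures, a simple check like the one below can flag readings as they approach the limit. How the readings are obtained is left open: the (timestamp, temperature) pairs, the 50 °C limit, and the 10 °C safety margin in the example are hypothetical placeholders, not MicaSense specifications. Use the operating range from your camera's documentation.

```python
from dataclasses import dataclass
from typing import Iterable, List, Tuple


@dataclass
class TempAlert:
    timestamp_s: float
    temp_c: float
    level: str  # "warning" or "critical"


def check_temperatures(readings: Iterable[Tuple[float, float]],
                       max_operating_c: float,
                       margin_c: float = 10.0) -> List[TempAlert]:
    """Flag readings within margin_c of the limit ("warning") or at/above it ("critical").

    readings: (timestamp in seconds, temperature in °C) pairs from whatever
    source you log; max_operating_c should come from the camera's documentation.
    """
    warn_at = max_operating_c - margin_c
    alerts: List[TempAlert] = []
    for timestamp_s, temp_c in readings:
        if temp_c >= max_operating_c:
            alerts.append(TempAlert(timestamp_s, temp_c, "critical"))
        elif temp_c >= warn_at:
            alerts.append(TempAlert(timestamp_s, temp_c, "warning"))
    return alerts


if __name__ == "__main__":
    # Hypothetical log and limit, for illustration only.
    log = [(0.0, 30.0), (600.0, 42.0), (1200.0, 51.0)]
    for alert in check_temperatures(log, max_operating_c=50.0):
        print(alert)
```

Keeping the margin as a parameter lets you stop a time-lapse before the camera reaches its limit rather than after.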

Improving Results from the Alignment.ipynb code

Changing pyramid_levels from 0 to 3 can produce a good alignment for close-range imagery. Note that the thermal imagery isn't aligned using any kind of matching (only the factory calibration), so it will be slightly offset, but given the difference in pixel size this doesn't appear to affect the results much.

This works because with pyramid_levels set to 0, the software uses the factory Rig Relatives calibration as the starting match transformation. That calibration assumes the images were taken far enough away that the transformation is dominated by the angular differences between the lens directions, not by the XY offset between the imagers. When the subject is very close to the camera, this assumption no longer holds.
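The assumption can be made concrete with the standard two-view pinhole model. Assume, for simplicity, that two imagers share the same intrinsic matrix K, that R is the rotation between their viewing directions, and that t is the offset between them. A pixel with homogeneous coordinates x̃₁ that observes a point at depth Z then maps into the second imager as follows (≃ denotes equality up to scale):

```latex
\begin{aligned}
\mathbf{X}_1 &= Z\,\mathbf{K}^{-1}\tilde{\mathbf{x}}_1 \\
\mathbf{X}_2 &= \mathbf{R}\,\mathbf{X}_1 + \mathbf{t} \\
\tilde{\mathbf{x}}_2 &\simeq \mathbf{K}\,\mathbf{X}_2
  = Z\,\mathbf{K}\mathbf{R}\mathbf{K}^{-1}\tilde{\mathbf{x}}_1 + \mathbf{K}\mathbf{t}
  \simeq \mathbf{K}\mathbf{R}\mathbf{K}^{-1}\tilde{\mathbf{x}}_1 + \frac{1}{Z}\,\mathbf{K}\mathbf{t}
\end{aligned}
```

The first term depends only on the angular difference between the lenses and is the same at every distance; the second shrinks as 1/Z. Far from the subject it becomes negligible, which is the regime a rotation-dominated starting transform assumes, while at 1-2 m it can dominate.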

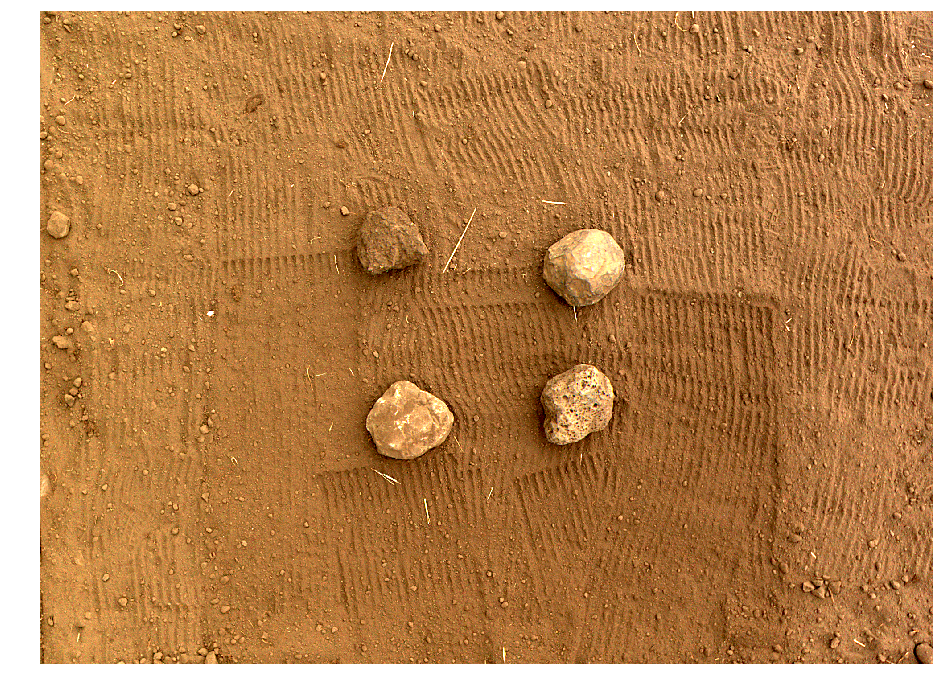

With pyramid_levels set to a non-zero value, the software builds an image pyramid with that many levels. For example, setting pyramid_levels to 3 creates images downsampled by factors of up to 2^3 = 8, and the matching process starts at the top of that pyramid (the smallest image) using an identity transform. The software finds a match at the smallest resolution, then uses it as the starting point for the next higher resolution, repeating until it has matched at the original input resolution. Below is an example of some rocks about 1 m from the lenses, which were successfully aligned.

Please download the attached "Alignment-Rocks.zip" file to see the full code run for this example.
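The coarse-to-fine idea behind pyramid_levels can be illustrated with a small, self-contained NumPy sketch. It is not the MicaSense library's code and is not how the library implements matching: it estimates only an integer translation by brute-force search over a small window at each level, and the function names are hypothetical. Like the process described above, it starts from an identity transform at the smallest image and doubles the estimate at each finer level.

```python
import numpy as np


def downsample(img: np.ndarray) -> np.ndarray:
    """Halve resolution by averaging 2x2 blocks (drops an odd trailing row/column)."""
    h, w = img.shape[0] // 2 * 2, img.shape[1] // 2 * 2
    img = img[:h, :w]
    return 0.25 * (img[0::2, 0::2] + img[1::2, 0::2] + img[0::2, 1::2] + img[1::2, 1::2])


def build_pyramid(img: np.ndarray, levels: int) -> list:
    """pyr[0] is full resolution; pyr[-1] is downsampled by 2**levels."""
    pyr = [img.astype(np.float64)]
    for _ in range(levels):
        pyr.append(downsample(pyr[-1]))
    return pyr


def ssd_at_shift(ref: np.ndarray, mov: np.ndarray, dy: int, dx: int) -> float:
    """Mean squared difference between ref[y, x] and mov[y - dy, x - dx] over their overlap."""
    h, w = ref.shape
    y0, y1 = max(0, dy), min(h, h + dy)
    x0, x1 = max(0, dx), min(w, w + dx)
    if y1 <= y0 or x1 <= x0:
        return np.inf
    r = ref[y0:y1, x0:x1]
    m = mov[y0 - dy:y1 - dy, x0 - dx:x1 - dx]
    return float(np.mean((r - m) ** 2))


def align_translation(ref: np.ndarray, mov: np.ndarray,
                      pyramid_levels: int = 3, radius: int = 2) -> tuple:
    """Return (dy, dx) such that np.roll(mov, (dy, dx), axis=(0, 1)) best matches ref."""
    ref_pyr = build_pyramid(ref, pyramid_levels)
    mov_pyr = build_pyramid(mov, pyramid_levels)
    dy, dx = 0, 0  # identity transform at the coarsest level
    for level in range(pyramid_levels, -1, -1):
        if level != pyramid_levels:
            dy, dx = 2 * dy, 2 * dx  # carry the estimate to the next finer level
        r, m = ref_pyr[level], mov_pyr[level]
        best = (np.inf, dy, dx)
        # Refine with a small exhaustive search around the current estimate.
        for ddy in range(-radius, radius + 1):
            for ddx in range(-radius, radius + 1):
                cost = ssd_at_shift(r, m, dy + ddy, dx + ddx)
                if cost < best[0]:
                    best = (cost, dy + ddy, dx + ddx)
        _, dy, dx = best
    return dy, dx


if __name__ == "__main__":
    # Synthetic scene: smooth Gaussian blobs on a dark background.
    yy, xx = np.mgrid[0:128, 0:128]
    scene = np.zeros((128, 128))
    for cy, cx, s in [(50, 60, 8.0), (75, 45, 10.0), (64, 85, 6.0)]:
        scene += np.exp(-((yy - cy) ** 2 + (xx - cx) ** 2) / (2 * s ** 2))
    # Simulate a second band offset by (16, -8) px (np.roll wraps at the edges).
    band = np.roll(scene, (-16, 8), axis=(0, 1))
    print(align_translation(scene, band, pyramid_levels=3))
```

With pyramid_levels=3 and a ±2 px window, the coarsest search already spans ±16 px at full resolution, which is why an identity start can still recover offsets that a ±2 px search at full resolution from the same start would miss.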